One of the most anticipated features has just been added to RPCS3! High resolution rendering allows users to play at resolutions far exceeding what the PS3 could handle. If you thought your favourite PS3 games were starting to look a bit dated, just wait until you get to experience them in up to 10k! Although we doubt many users have the setup necessary to benefit from 10k today, emulation is all about preserving games for tomorrow.

The video above showcases various popular titles in a side-by-side comparison of 720p (native PS3 resolution) and 4k rendering with 16x anisotropic filtering in RPCS3. The difference is quite incredible in titles that have high quality assets. There is a lot of detail that just wasn’t visible at 720p. This feature is available for every PlayStation 3 game running on RPCS3, with one exception: it does not yet work with Strict Rendering Mode. Games that require this setting will have to wait a bit longer before they can benefit from high resolution rendering.

Performance

Rendering a modern PC game at high resolutions such as 4k, while beautiful, is quite taxing on your hardware, and there is often a massive hit in performance. However, since most of the workload for RPCS3 is on the CPU and GPU usage is low, there is a lot of untapped GPU performance just waiting to be used. The heavy lifting is done CPU side, and as far as the GPU is concerned it is simply rendering 2006 era graphics (yes, the PS3 is 11 years old now). We’re happy to report that anyone with a dedicated graphics card that has Vulkan support can expect the same performance at 4k as at native resolution.
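For a rough sense of how much extra work the GPU is being asked to do, here is a minimal sketch of the arithmetic. It assumes native means 1280x720 and 4k means 3840x2160. The function name `scale_factors` is purely illustrative and is not part of RPCS3.

```python
# Illustrative only: how much larger a target resolution is than 720p.
NATIVE = (1280, 720)  # assumed native PS3 render resolution (720p)


def scale_factors(target, native=NATIVE):
    """Return (per-axis scale, pixel-count multiplier) for a target resolution."""
    target_w, target_h = target
    native_w, native_h = native
    axis_scale = target_w / native_w
    # Reject targets that would stretch the image instead of scaling it uniformly.
    if abs(axis_scale - target_h / native_h) > 1e-9:
        raise ValueError("target does not share the native aspect ratio")
    pixel_ratio = (target_w * target_h) / (native_w * native_h)
    return axis_scale, pixel_ratio


if __name__ == "__main__":
    resolutions = {"1080p": (1920, 1080), "1440p": (2560, 1440), "4k": (3840, 2160)}
    for name, res in resolutions.items():
        axis, pixels = scale_factors(res)
        print(f"{name}: {axis:.2f}x per axis, {pixels:.2f}x the pixels of 720p")
```

Under those assumptions, 4k works out to 3x per axis and 9x the pixels of 720p, which is the extra load the GPU absorbs while the CPU side workload stays the same.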

Anisotropic Filtering (AF)

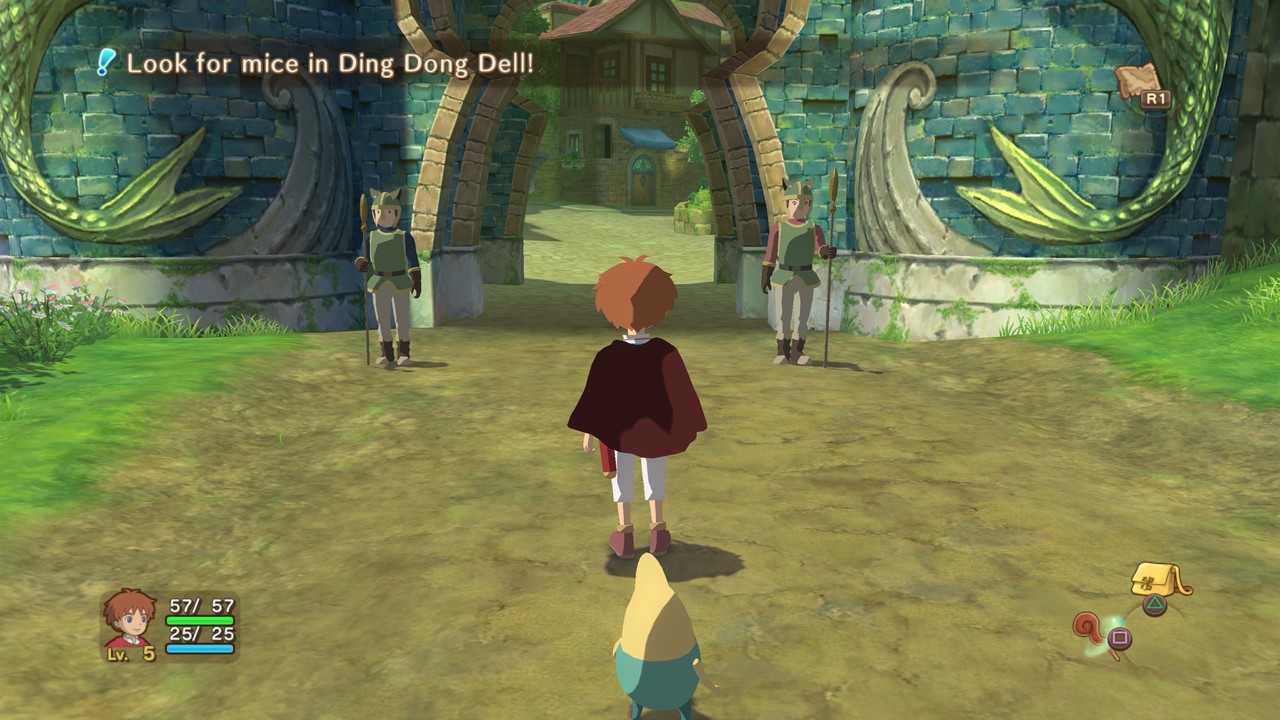

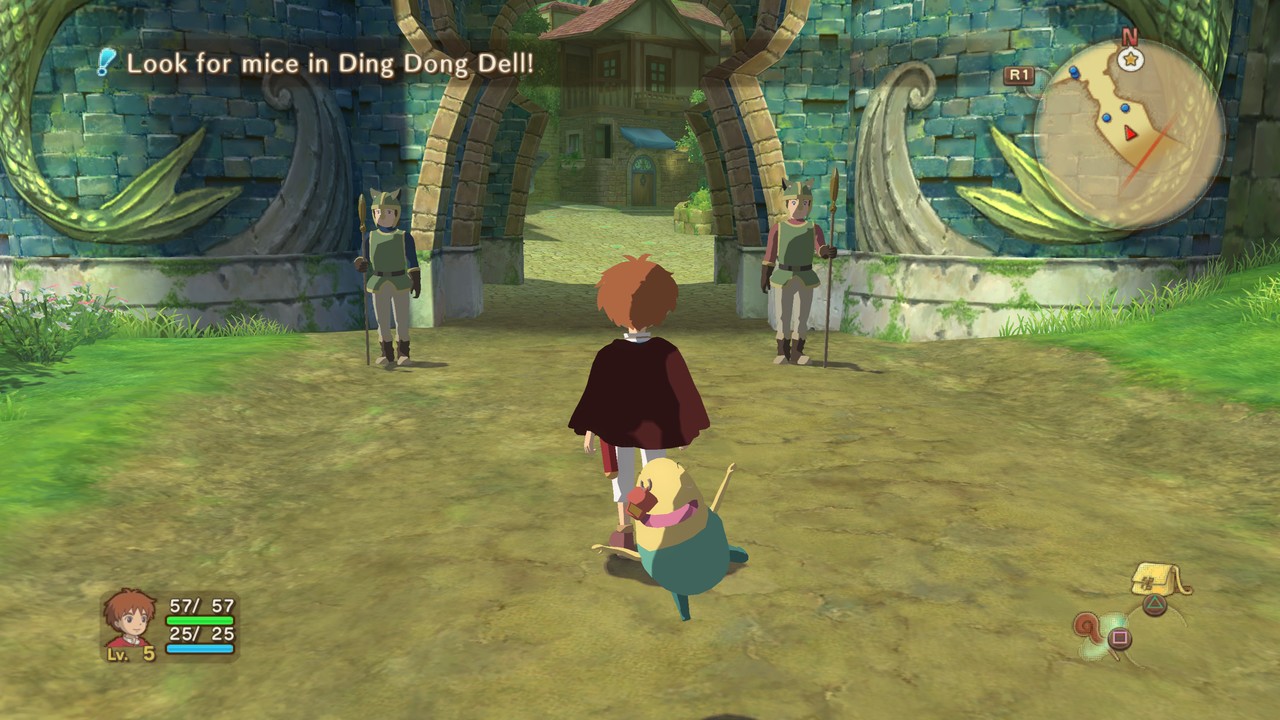

High resolution support wasn’t the only thing added in this update! Another reason for such a massive upgrade in visual fidelity is full 16x AF support. This greatly improves how textures look, especially when viewed at an angle. Take a look at the accompanying screenshots of Ni no Kuni for a comparison of the default AF vs forced 16x. The difference is especially noticeable on the ground inside the gate.

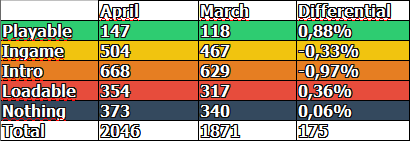
